Many of our readers, listeners and viewers are not aware of the different ratings available to help consumers evaluate hospitals. While there are challenges with using these ratings, they may be helpful for patients, especially those preparing for an elective procedure.

Experienced journalists have seen, and in many cases written, numerous stories examining the differing methodologies and motives of the groups that produce hospital ratings. It’s important to make sure your readers understand how much marketing may be involved with reports they see about hospitals getting top marks.

The existence of hospital ratings is likely old news for many of us. But many of the people we serve may not know about them, or may not know what to do with the data they provide.

“Stories that are written about websites like Leapfrog Group can be a beneficial source of information,” Christine Smith of Rockville, Maryland, said in an email to AHCJ.

Smith told me she thought Medstar Georgetown University Hospital was a top-rated center due in part to the statements on its website and its affiliation with a prestigious academic institution. During our conversation, I told her I would look at the ratings the Centers for Medicare & Medicaid Services (CMS) had posted for Medstar Georgetown University Hospital. On Medicare.gov’s comparison page, the hospital earned two of five possible stars, as seen in the screenshot below.

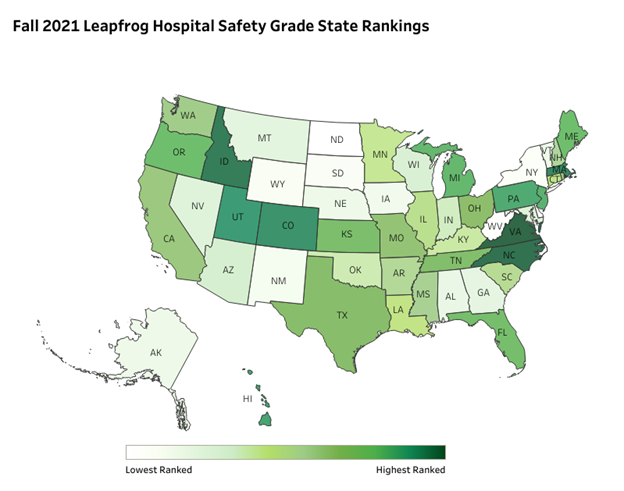

But there are hospitals in neighboring Maryland and Virginia that earned four-star and five-star ratings. (If you are curious which hospitals got these more impressive marks, you can search here.) The same holds true in the latest rankings from The Leapfrog Group.

Analyzing hospital ratings

Medstar Georgetown University Hospital earned a “C.” That was a drop from the “B” score it received in 2020, the same level it earned in 2019. In 2018, the hospital got a “C” in Leapfrog’s ratings. But there are hospitals close to D.C. that earned the top mark of an “A” from The Leapfrog Group. Neighboring Virginia was among the five states with the highest percentages of “A”-rated hospitals, with the others being North Carolina, Idaho, Massachusetts and Colorado. There were no “A” hospitals in Delaware, Washington, D.C., or North Dakota.

How tough was it to get a top score? Of all the hospitals whose performance The Leapfrog Group measured, 32% received an “A,” 26% received a “B,” 35% received a “C,” 7% received a “D,” and less than 1% received an “F,” Leapfrog said in a press release.

In the nonprofit Lown Institute’s hospital index, Medstar Georgetown Hospital gets a “B” rating for clinical outcomes and patient safety. It gets three of five stars for patient experience in the ratings done by U.S. News & World Report. On Healthgrades, another ratings website, Medstar Georgetown Hospital has a rating of 77%, which is described as 7% higher than the national average.

But, as with the CMS and Leapfrog ratings, searches on the Lown Institute, U.S. News & World Report and Healthgrades websites show hospitals in the region with better ratings.

“Jumping-off point” for conversations patients should have with doctors

So, what is a consumer to do with the information provided by these hospital ratings, especially when they differ? Use them to help get more information from doctors, especially in cases where a patient may get a referral to a hospital within the same system that employs many physicians, John Matthew Austin, Ph.D., M.S., of the Armstrong Institute for Patient Safety and Quality at Johns Hopkins University, told AHCJ in an interview. (Austin was quick to note that he had received funding from Leapfrog during his career.)

Cringe-worthy press releases

Journalists should avoid writing stories about a single set of ratings that are based largely or solely on the press releases hospitals issue about their latest results.

“Few things in health journalism make me cringe more than news releases touting hospital ratings and awards,” wrote veteran journalist Charles Ornstein in a 2013 article for AHCJ. In this article, titled “Should journalists cover hospital ratings?” Ornstein urged his colleagues to seek data released by their state health departments. He also highlighted the website hospitalinspections.org, run by AHCJ, as a resource.

But the release of new data from organizations such as Leapfrog can provide news pegs allowing journalists to introduce some readers to this topic, while also reminding others of the resources available to research the quality of local hospitals, Austin said.

“There is an under awareness that there is even information out there,” Austin added.

CEO compensation as a news peg?

In March, Judith Garber of the Lown Institute asked a question that likely would resonate with many of our readers, viewers and listeners. Garber, a health policy and communications fellow, posted an article on her organization’s website titled “Does higher hospital CEO compensation mean better quality?”

“There’s nothing wrong with paying a hospital leader for doing a good job, especially in a crisis,” Garber wrote. “But when compensation goes into the millions we have to ask, are we getting what we pay for?”

With this suggestion, Garber proposed a hook that likely could draw in even people who already are aware of hospital ratings.

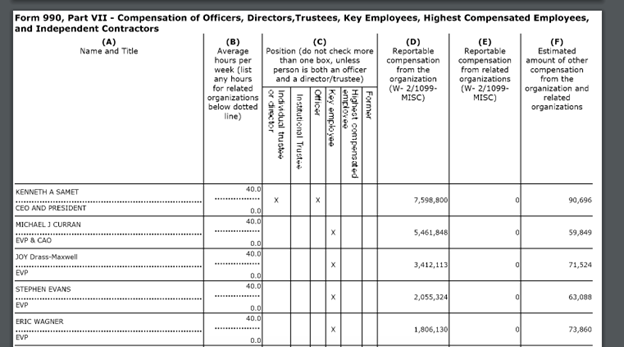

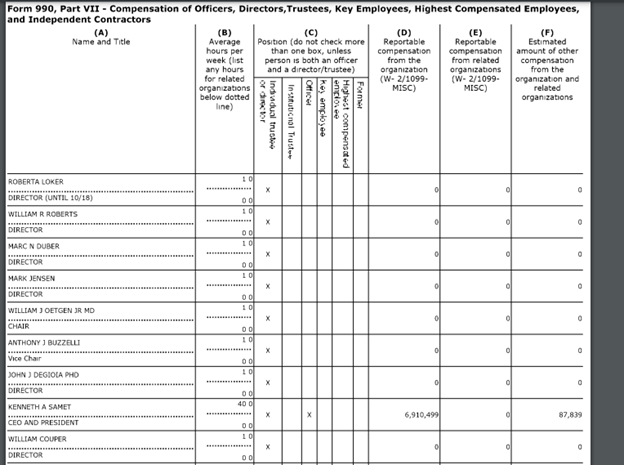

And it’s easy to find out how much some hospitals pay their CEOs. The Internal Revenue Service (IRS) requires nonprofits to disclose how much compensation they give their top executives. The organizations must do this on what is called Form 990. You can find these forms through the tax-exempt organization search on the IRS website. This information also is posted on sites such as ProPublica’s Nonprofit Explorer. Always check with an organization to confirm that what appears on these websites is correct.

AHCJ, for example, asked Medstar to comment on whether the compensation figures reported for the organization’s chief executive, Kenneth A. Samet, were correct. Medstar had not responded to this request as of Monday afternoon. AHCJ also offered Medstar a chance to comment on its ratings. The organization had not replied to that request either.

Samet received compensation topping $7.5 million in the 2019 tax year, according to the Form 990 for Medstar posted on the IRS website.

For the 2018 tax year, the Form 990 for Medstar shows Samet receiving compensation of more than $6.9 million.

So, one way to delve into hospital ratings could be to ask the board of directors of a hospital organization whether ratings played a role in setting compensation.

Resources for reporters

- “Tips to keep in mind when reporting ‘best of’ hospital ratings” from Liz Seegert.

- This article from Charles Ornstein, “Should journalists cover hospital ratings?”

- This interview posted on the Health Affairs website, in which Austin speaks about his 2015 paper and offers suggestions for using hospital ratings.

You can also reach out to the groups that create these ratings. Ben Harder, managing editor and chief of health analysis at U.S. News & World Report, for example, told AHCJ he is willing to talk with reporters about ratings, even if they are not looking for quotes from him.

Another way to find sources on this issue, and almost any other topic in health care, is to look on the National Library of Medicine’s PubMed website.

A shortcut, in this case, would be to look at the articles that have cited the work of Austin and colleagues. The screenshot below shows where to find this information, circled in blue.

One of the articles found in the above search raises good questions about how well even informed consumers may use ratings. Titled “National Evaluation of Patient Preferences in Selecting Hospitals and Healthcare Providers,” the article appeared in the journal Medical Care in October 2020.

Its authors wrote that “patients may not even conceptualize ‘researching where to receive care’ in the same way as those designing hospital rankings and quality measures, further highlighting the chasm that must be bridged to increase thorough, patient-based interpretation of healthcare quality data.”

Other AHCJ posts on this topic

- Using quality ratings in reporting on health care

- Surgeons’ complication rates become public with new database

- Debunking myths designed to hinder price, quality transparency efforts

- Covering hospital ratings? Here’s one aspect consumers need you to report

- Journalists share tips for weighing hospital rankings

- Updated hospital data allows reporters to identify ongoing problems

- Making sense of hospital quality reports