Tag: p-hacking

-

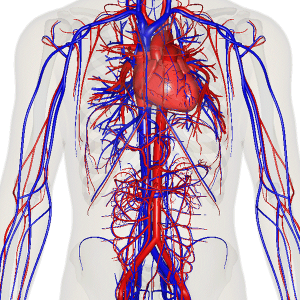

Covering vascular surgery? Watch for selection bias in this database

If you cover medical research related to vascular procedures and conditions, you’ve likely come across studies using data from the…

-

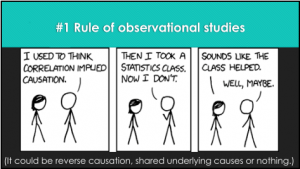

Panelists explain how to begin mastering medical studies

At some point almost all health care journalists will need to cover a medical study or two. When that happens,…

-

Assessing the red flags in a study … annotated

I’m frequently asked on social media for my thoughts on a particular study. In this situation, I thought the quick…

-

P-hacking, self-plagiarism concerns plague news-friendly nutrition lab

Some of the most difficult research to make sense of comes from nutrition science. It is difficult, expensive and labor-intensive…

-

A guide to understanding why science is messy, hard and wonderful

An utterly fantastic long read by Christie Aschwanden at FiveThirtyEight.com cuts to the chase very early: “Science is hard –…